ChatGPT Is About to Get a Lot Faster — OpenAI's $10B Speed War

OpenAI signed a $10B+ deal with Cerebras to make ChatGPT up to 15x faster. The AI arms race has shifted from training to inference — and latency is now the defining battleground.

The AI arms race has shifted from training to inference — and latency is the new battleground

$10 Billion Spent on Shaving Seconds

You know that moment after you hit Enter in ChatGPT — the cursor blinking, waiting for the first word to appear? That lag averages around six seconds. It doesn't sound like much. But in the current AI landscape, those seconds are where the real competition lives.

In January 2026, OpenAI signed a deal with chip startup Cerebras worth more than $10 billion. The contract covers 750 megawatts (MW) of computing capacity through 2028, with a single stated goal: make ChatGPT up to 15x faster.

Why spend this kind of money on speed? Because being smarter is table stakes now. The real differentiator has shifted to how fast you respond. If an AI takes 30 seconds to write code, you'll tolerate it. If it takes two minutes, you'll close the tab. That gap is an infrastructure problem — and OpenAI is throwing $10 billion at it.

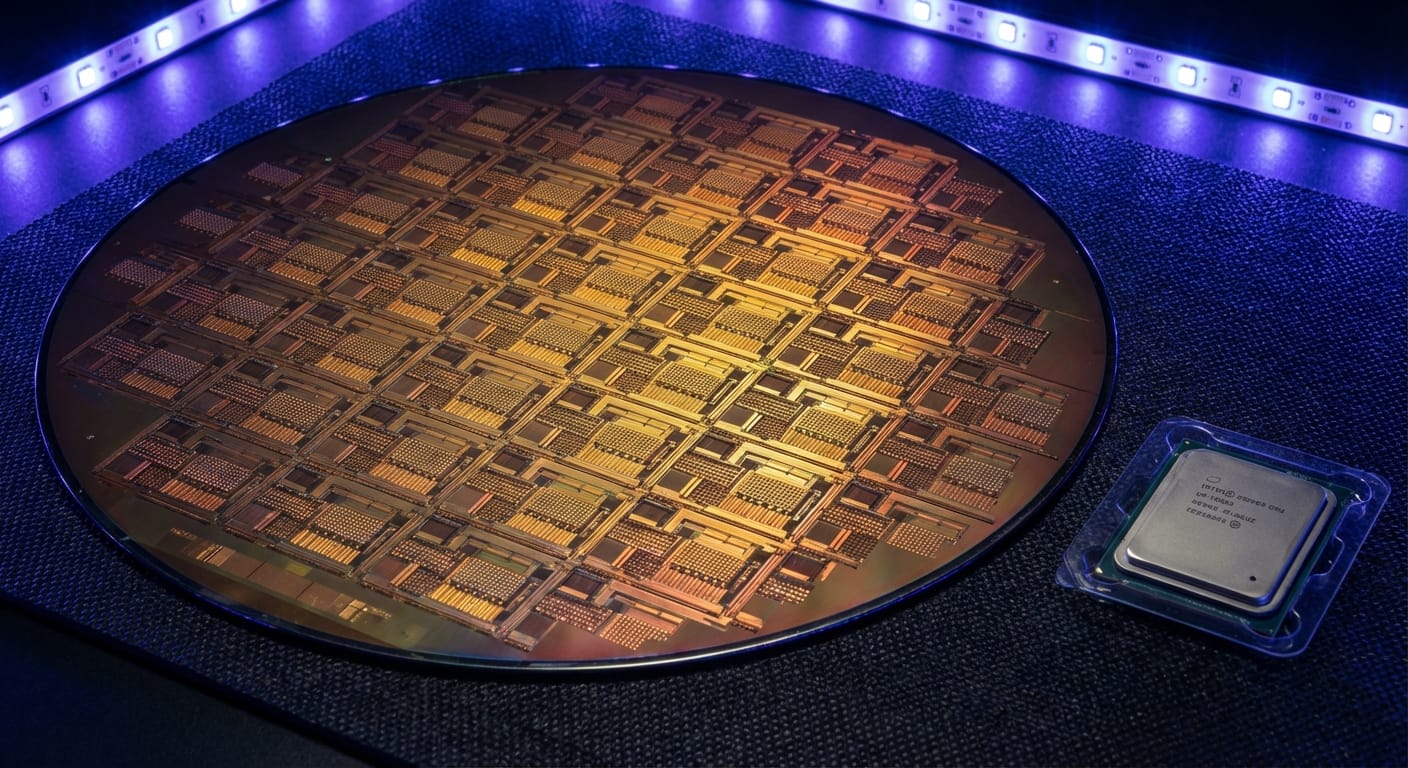

What Is Cerebras? — The World's Largest AI Chip

Cerebras builds the WSE-3 (Wafer-Scale Engine, 3rd generation), and the specs are absurd. The chip packs 4 trillion transistors. Nvidia's H100, the current gold standard, has 80 billion. That gives the WSE-3 roughly 50x the transistor count.

More transistors would be meaningless if the chip weren't also fast. Benchmarks show:

- GPT-class models: 3,000 tokens per second

- Llama 3.2 (large model): 2,100 tokens per second, 16x faster than GPU-based systems

- Overall inference speedup vs. conventional GPU systems: up to 15x
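To make those throughput figures concrete, here is a minimal sketch that converts a decode rate into wall-clock time for one response. The 1,500-token response length is a hypothetical value, and the 200 tokens-per-second baseline is an assumption obtained by dividing 3,000 by the 15x figure; the benchmarks above do not state that the two numbers share a baseline.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady decode rate (ignores time to first token)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 1_500              # hypothetical response length
FAST_RATE = 3_000.0                  # tokens/s, from the benchmark list
SPEEDUP = 15.0                       # "up to 15x", from the benchmark list
BASELINE_RATE = FAST_RATE / SPEEDUP  # assumed baseline: 200 tokens/s

fast_seconds = generation_seconds(RESPONSE_TOKENS, FAST_RATE)
baseline_seconds = generation_seconds(RESPONSE_TOKENS, BASELINE_RATE)

print(f"fast:     {fast_seconds:.1f} s")
print(f"baseline: {baseline_seconds:.1f} s")
```

Under these assumptions the same response takes half a second instead of seven and a half, which is the difference between reading along and waiting.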

Think of a GPU as a delivery truck. Cerebras is a freight train — more capacity, different speed profile, built for scale. The engineering trick that makes this possible? Cerebras uses an entire silicon wafer as one chip. Traditional chip fabrication cuts a wafer into dozens of individual dies. Cerebras skips that step entirely — hence "wafer-scale."

| Spec | Nvidia H100 | Cerebras WSE-3 | Difference |
|---|---|---|---|
| Transistors | 80 billion | 4 trillion | 50x |
| Cores (CUDA cores vs. AI cores) | 16,896 | 900,000 | 53x |
| Inference speed | baseline | up to 15x faster | 15x |
| Die size | 814 mm² | 46,225 mm² | 57x |

Why Speed Matters — Latency Is UX

When engineers talk about AI performance, two latency metrics matter most.

TTFT (Time to First Token) — how long until the first word appears after you submit a prompt. For real-time chat and voice AI, this is what determines whether a product feels alive or sluggish. Under a second feels instant; five seconds feels broken.

TPOT (Time per Output Token) — how smoothly the response streams once it starts. This matters most for long-form outputs: code generation, document drafting, multi-step reasoning. Choppy output breaks flow in ways that accumulate fast over a full workday.
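Both metrics can be computed from per-token arrival timestamps. The sketch below is illustrative only: `latency_stats` and `LatencyStats` are hypothetical names, the timestamps are made up, and TPOT is taken here as the average gap between consecutive tokens after the first one (some tools define it slightly differently).

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class LatencyStats:
    ttft: float   # seconds from request to first token
    tpot: float   # average seconds between subsequent tokens
    total: float  # seconds from request to last token


def latency_stats(request_time: float, token_times: Sequence[float]) -> LatencyStats:
    """Derive TTFT and TPOT from the arrival time of each output token."""
    if not token_times:
        raise ValueError("need at least one token timestamp")
    ttft = token_times[0] - request_time
    total = token_times[-1] - request_time
    gaps = len(token_times) - 1
    # With a single token there is no inter-token gap to average.
    tpot = (token_times[-1] - token_times[0]) / gaps if gaps else 0.0
    return LatencyStats(ttft=ttft, tpot=tpot, total=total)


# Hypothetical stream: first token after 0.5 s, then one token every 0.25 s.
stats = latency_stats(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(stats)
```

With this definition, total response time is TTFT plus TPOT times the number of remaining tokens, so long outputs are dominated by TPOT and short chat replies by TTFT.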

Developer forums are full of threads about losing focus mid-task because ChatGPT paused at an inconvenient moment. A few seconds of lag sounds trivial until you're hitting it fifty times a day.

The stakes get higher as AI moves from answering questions to running autonomous workflows: drafting emails, pulling research, scheduling follow-ups. Each step in a multi-agent pipeline adds its own latency, and the delays stack. A 5-second delay per action becomes a 30-second delay for a six-step task. That's why OpenAI is making this bet now, before agentic AI becomes mainstream.
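The compounding is plain addition when steps run one after another. The sketch below assumes six sequential actions (the count implied by 5 seconds growing to 30) and, as a simplification, applies the 15x speedup to the whole step latency; in practice network and tool-call time would not shrink with faster inference.

```python
from typing import Iterable


def sequential_task_latency(step_latencies: Iterable[float]) -> float:
    """Total latency of a pipeline whose steps run strictly one after another."""
    return sum(step_latencies)


STEPS = 6                 # assumed number of sequential actions per task
PER_STEP_SECONDS = 5.0    # per-action delay from the text
SPEEDUP = 15.0            # assumption: the full step latency shrinks by 15x

baseline_total = sequential_task_latency([PER_STEP_SECONDS] * STEPS)
faster_total = sequential_task_latency([PER_STEP_SECONDS / SPEEDUP] * STEPS)

print(f"baseline task latency: {baseline_total:.0f} s")
print(f"with 15x faster steps: {faster_total:.0f} s")
```

The longer the chain of actions, the more a per-step saving is worth, which is why agentic workloads are more sensitive to latency than single-turn chat.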

The End of Nvidia's Monopoly — A New Phase in the Chip War

Nvidia still dominates training — the phase where frontier models like GPT are built from scratch, requiring clusters of thousands of H100s running for months. No one seriously challenges Nvidia there.

But inference — the part where AI generates your actual response — is a different game. General-purpose GPUs were designed for flexibility. Inference workloads don't need flexibility; they need throughput and efficiency. That's exactly what specialized chips deliver. Think F1 car versus sports car: same category, completely different optimization target.

The specialized inference chip market exploded in 2026:

- Etched: raised $500M, claiming 10–20x power efficiency over Nvidia GPUs

- Groq: acquired by Nvidia for billions — a tacit acknowledgment that the technology is real

- Qualcomm AI200: 768GB of memory, 4x more than Nvidia's B200, enabling larger models on a single chip

- Microsoft: unveiled its own inference chip in January 2026

Market data backs the trend. Custom ASIC shipments are forecast to grow 44.6% in 2026. GPU shipments? 16.1%.

| Characteristic | GPU (Nvidia etc.) | ASIC (Cerebras, Groq etc.) |
|---|---|---|
| Versatility | Training + inference | Inference-optimized |
| Speed | Baseline | 10–15x faster |
| Power efficiency | Baseline | 10–20x better |
| Cost | High | Very high (upfront) |
| Ecosystem maturity | Mature | Early stage |

What triggered the shift? Since 2025, power has become the primary bottleneck, not compute. Running an AI data center at scale costs an extraordinary amount of electricity. That reframes the core question from "how fast can it go?" to "how fast can it go per watt?" — and that's where specialized silicon wins.
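One way to express "how fast per watt" is tokens per joule, since a watt is one joule per second. The sketch below uses entirely hypothetical systems and numbers (they are not measurements of any real chip) to show how a slower system can still deliver more total throughput when the data center is capped by power rather than by chip count.

```python
def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Energy efficiency: tokens generated per joule (1 W = 1 J/s)."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tokens_per_second / watts


def throughput_under_power_cap(tokens_per_second: float, watts: float,
                               power_budget_watts: float) -> float:
    """Aggregate tokens/s when the power budget is filled with identical systems."""
    units = power_budget_watts // watts  # whole systems that fit in the budget
    return units * tokens_per_second


# Hypothetical systems, for illustration only.
SYSTEM_A = {"tokens_per_second": 1_000.0, "watts": 10_000.0}  # faster per unit
SYSTEM_B = {"tokens_per_second": 800.0, "watts": 4_000.0}     # slower, more frugal
BUDGET_WATTS = 1_000_000.0                                    # 1 MW cap

eff_a = tokens_per_joule(**SYSTEM_A)
eff_b = tokens_per_joule(**SYSTEM_B)
total_a = throughput_under_power_cap(**SYSTEM_A, power_budget_watts=BUDGET_WATTS)
total_b = throughput_under_power_cap(**SYSTEM_B, power_budget_watts=BUDGET_WATTS)

print(f"A: {eff_a:.2f} tokens/J, {total_a:,.0f} tokens/s under the cap")
print(f"B: {eff_b:.2f} tokens/J, {total_b:,.0f} tokens/s under the cap")
```

In this made-up case system B is slower per unit but produces twice the aggregate throughput within the same power envelope.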

Why OpenAI Chose Cerebras

OpenAI already runs massive Nvidia and AMD deployments. So why add Cerebras on top?

Diversification. Single-vendor dependency is a strategic liability: a supply disruption or pricing shift from Nvidia would be an existential threat at OpenAI's scale. But there's also a pure performance argument: if your inference speed goal is 15x, you need the fastest inference chip available. Right now, that's Cerebras.

The deal structure:

- 750MW of compute capacity — roughly the electricity consumption of a mid-sized city

- Phased deployment starting early 2026, reaching full capacity by 2028

- Inference-workload focus — code generation, image generation, real-time agentic tasks
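For a sense of scale, the 750MW figure can be turned into annual energy. This is back-of-the-envelope arithmetic that assumes the full capacity is drawn continuously all year; the `utilization` parameter is a hypothetical knob, since the actual draw is not stated.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(power_mw: float, utilization: float = 1.0) -> float:
    """Annual energy in terawatt-hours for a given power draw in megawatts."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    megawatt_hours = power_mw * HOURS_PER_YEAR * utilization
    return megawatt_hours / 1_000_000  # 1 TWh = 1,000,000 MWh


full_load_twh = annual_energy_twh(750)
half_load_twh = annual_energy_twh(750, utilization=0.5)

print(f"750 MW at full load: {full_load_twh:.2f} TWh per year")
print(f"750 MW at 50% load:  {half_load_twh:.2f} TWh per year")
```

At full load that comes to roughly 6.6 TWh per year.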

For Cerebras, this is a company-defining moment. Prior to the deal, a single UAE-based company (G42) accounted for 87% of Cerebras revenue. Locking in OpenAI as an anchor customer changes that picture entirely — and it's happening right as Cerebras is pursuing an IPO at a $22B valuation with $1B in fresh funding.

Both sides needed this deal. OpenAI gets speed; Cerebras gets credibility.

Speed as a Competitive Moat

Will ChatGPT actually feel faster to you? Almost certainly — especially for code generation and long-form writing. The rollout is phased, so not every request routes through Cerebras hardware immediately. But by mid-2026, certain workloads should see a noticeable difference.

What does this mean for the broader industry? Google (TPU), Anthropic (deep AWS integration), and Meta aren't sitting still. Inference speed is now a first-class competitive dimension, which ultimately benefits users: faster AI, at lower cost per query, accessed through more capable products.

The bigger question is what faster inference unlocks. Real-time translation. Responsive voice assistants. Collaborative AI that keeps up with human conversation speed. Many of today's limitations aren't model quality problems — they're latency problems. OpenAI's $10 billion bet is that removing that ceiling opens up an entirely new category of AI products. We'll find out soon enough.
